What do a child and a neural network have in common? Both are in the early stages of their development, and both come up with delightfully unexpected responses to the data they take in. Scientist and neural network pop artist Janelle Shane brings that idea to life in her neural network art projects, in which computer brains come up with strange names for paint colors, tell bizarre knock-knock jokes, and generally prove that mimicking the human brain is not so easy. Shane shares with us what inspires her, how she creates her neural network art, and more.

RECURSOR: Tell us about your day job.

Janelle Shane

SHANE: I’m currently working on holographic laser beam steering: using a computer-generated hologram to take a single laser beam and turn it into hundreds of independently steerable laser beams. There are research groups that have engineered mice whose neurons are sensitive to light, so if you zap a neuron with a beam of light, that neuron will fire. If you’re able to zap hundreds of neurons and make them fire at will, you can do repeatable experiments looking at the connectivity in a mouse brain and learning more about how its neural circuits are organized. Holographic laser beam steering is a really nice way of delivering these beams to the neurons when you need them.

How did you become interested in the neural network projects you do?

As a freshman at Michigan State University, I started out doing research in genetic algorithms. I had listened to a really cool talk by Professor Erik Goodman. He tried to train a genetic algorithm to make a car bumper that would crumple in a way that protected the occupants. It arrived at a solution that worked really well, but nobody understood why it worked, because it wasn’t using any design principles that humans had put in. I thought that was really cool.

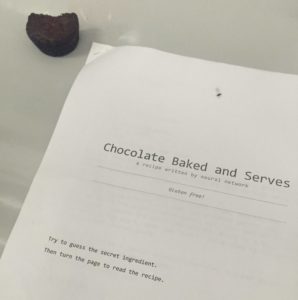

Chocolate peanut butter batter, to which Janelle was about to add horseradish because a neural network recipe suggested it.

Another time, he tried to solve a physics problem with a genetic algorithm. It evolved a solution that was amazingly good. Then he found out he hadn’t told it that you can’t put all the atoms at exactly the same point in space, occupying a singularity. I thought that was really interesting as well.

I’ve always been intrigued by machine learning, and a little over a year ago I read about neural networks. I’d come across lists of cookbook recipes that Tom Brewe had generated with a neural network. I thought they were great, so I wanted to learn to make more of them.

Has your neural network produced anything eye-opening for you?

Illustration of a neural-network-generated story name, inspired by Shane’s neural network. (Original artwork by Tumblr user Everyzig314.)

I had wondered if a neural network could learn to generate pickup lines. I thought it would be kind of funny. As I was collecting the data set, I started to regret the whole project because these pickup lines were terrible. They’re bad puns or they’re insulting or they’re kind of aggressive. I thought, why am I training a neural network to generate more of these?

But the neural network came up with these charming statements — like “You are so beautiful that you make me feel better to see you,” and “You look like a thing, and I love you.” It really surprised me that it managed to extract meaning out of these things and that the meaning was so simple and disarmingly charming.

Do you consider your projects to be art, science or both?

These neural network experiments are definitely closer to the art and literature side of things than the science side of things. I’m using a relatively simple neural network framework and applying it to different data sets, but I’m not doing systematic experiments or trying to extract something that could be used to improve the algorithms.

In some ways, when it’s generating hilarious nonsense, it’s doing exactly what I want. The mistakes are actually what I’m interested in: seeing what kind of interesting mistakes the neural network comes up with.

A chocolate horseradish brownie that someone took a bite of, then abandoned.

Can you give us an example?

The neural network’s whole process of trying to learn the format of knock-knock jokes! It was really funny to see all the mistakes it made along the way and the strange situations it would get itself into, and then to see it come up with an original knock-knock joke with a pun that actually works:

Knock Knock

Who’s there?

Alec

Alec who?

Alec knock knock jokes.

I would definitely say there’s art in there.

How about the science aspects of what you’re doing?

It’s a powerful outreach tool. I’ve been contacted by a lot of people who had not heard of neural networks before but now want to start experimenting with them. And I’ve heard from high school students and computer science freshmen who are all saying, “This is something I can get started on. How do I do this?” I’ve really liked that aspect of it as well.

Do you have any favorite sci-fi or fantasy books or movies?

My favorite fantasy/sci-fi is Ursula K. Le Guin’s writing. Each of her worlds is so rich and so complex. It’s absolutely jaw-dropping to start one of her short stories, and in a couple of paragraphs she’s built this immersive world where everybody thinks and acts and lives as if they had grown up in this very different world. There’s this ability to think in a different way, to try to imagine being brought up without some of the same cultural surroundings that we have now. I find that really refreshing.

In a way, that’s what I’m probing with the neural network. What can it come up with if it knows how to write a few English words but doesn’t have a lot of assumptions?

Janelle Shane

Janelle Shane is a research scientist working in industry R&D in optics, biophotonics, and beam steering. In her spare time, she trains neural networks and posts the results on her Tumblr blog.